Unlocking the Value of Data with EIIG

Data lineage tracks the flow of data through an environment, showing where the data originated and how it changes along the way. Algorithms that attempt to explain how an AI arrived at its results do so in terms of the data the model starts with. Adding a description of that data’s lineage, combined with active metadata such as data quality scores, gives you a much stronger basis for confidence in the results.

The Growth of AI

AI is all around us. There has always been a question of how much to trust the answers your AI gives you; algorithms such as LIME and SHAP were developed to open up the machine learning black box and explain how its decisions were reached. This is generally known as Explainable AI. It is important in many cases, from explaining to a customer why their loan was rejected to explaining to scientists why their model suggested a new drug design.

Over the past few months, generative AI tools like ChatGPT have generated a lot of excitement with their ability to do everything from holding a conversation to writing code or research papers. However, reports in the media describe ChatGPT confidently giving inaccurate answers and engaging in inappropriate conversations. As these tools move into the mainstream, it’s even more important to explain how they come to their conclusions.

Whatever algorithm is used, at its core Explainable AI seeks to relate the inputs of the machine learning model to the decisions it makes. The explanation stops at the input data; it makes no attempt to describe where that data came from. It’s the role of data engineers and data scientists to gather data, clean it up, and shape it into a form appropriate for input into the machine learning model. Along the way, data often moves through multiple databases, ETL tools, and even applications written in languages like Java or Python. The path that data takes through these steps is referred to as its data lineage.
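To make "relating inputs to decisions" concrete, here is a minimal sketch of an additive attribution for a linear scoring model. It is not LIME or SHAP itself, only a simplified illustration of the same idea; the model, the feature names, the weights, and the baseline values are all hypothetical.

```python
from typing import Dict

# Hypothetical linear loan-scoring model: score = BIAS + sum(weight * value).
BIAS = 50.0
WEIGHTS: Dict[str, float] = {"income_k": 0.5, "debt_ratio": -40.0, "years_employed": 2.0}


def score(inputs: Dict[str, float]) -> float:
    """Score an applicant with the hypothetical linear model."""
    return BIAS + sum(WEIGHTS[name] * inputs[name] for name in WEIGHTS)


def attributions(inputs: Dict[str, float], baseline: Dict[str, float]) -> Dict[str, float]:
    """Contribution of each input to (score(inputs) - score(baseline))."""
    return {name: WEIGHTS[name] * (inputs[name] - baseline[name]) for name in WEIGHTS}


# Illustrative values only: a "typical" applicant and the one being explained.
baseline = {"income_k": 60.0, "debt_ratio": 0.30, "years_employed": 5.0}
applicant = {"income_k": 45.0, "debt_ratio": 0.55, "years_employed": 1.0}

contrib = attributions(applicant, baseline)
# Rank inputs by how strongly they moved the score, largest effect first.
ranked = sorted(contrib.items(), key=lambda item: abs(item[1]), reverse=True)

for name, value in ranked:
    print(f"{name}: {value:+.2f}")
```

The ranking says which input values drove the score, but nothing about whether those values were correct when they reached the model. That gap is what data lineage fills.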

Building Trust in AI Data

Explainable AI tells you what data informed a machine learning algorithm’s decision, but it does not tell you whether that data can be trusted. That’s the role of a data lineage tool. Data lineage tools follow data on its journey from a source system to the machine learning model, detailing exactly how it was transformed along the way.

Even data lineage is not enough on its own. To truly provide confidence in an AI’s results, you also want to look at factors such as the quality of the data and its timeliness. Overlaying this active metadata on the lineage helps you understand how each process affects the quality of the data fed to a machine learning model.

With this end-to-end view, you can determine how much to trust that data. You can look at the trustworthiness of the source, and make sure that the intermediate steps did not introduce any errors. If you trust both the model and the data that informs it, your confidence in the results is enhanced.

Example of Using Data Lineage to Strengthen AI Results

Combining data lineage and active metadata capabilities with Explainable AI improves your confidence in your AI’s results. When your AI tells you your building in Beverly Hills is worth $100/square foot, Explainable AI can trace that figure back to the erroneous zip code it depended on. Data lineage will tell you how that zip code ended up as an input in the first place, showing you where to fix the problem. Your business avoids selling the property at a huge loss, and once the pipeline is fixed you have better grounds to trust future results.
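The zip code scenario can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how EIIG or any particular lineage tool works: the step names, transformations, and quality scores are hypothetical, and real lineage is usually a graph with many upstream branches rather than the single chain used here.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class LineageStep:
    name: str                # dataset and column at this step (hypothetical names)
    upstream: Optional[str]  # the step this one was derived from; None marks the source
    transform: str           # how the value was produced at this step
    quality: float           # active metadata: hypothetical quality score in [0, 1]


def trace_to_source(steps: Dict[str, LineageStep], start: str) -> List[LineageStep]:
    """Walk upstream from a model input to its source system."""
    path: List[LineageStep] = []
    seen = set()
    current: Optional[str] = start
    while current is not None:
        if current in seen:
            raise ValueError(f"cycle in lineage at {current!r}")
        seen.add(current)
        step = steps[current]
        path.append(step)
        current = step.upstream
    return path


def first_suspect(path: List[LineageStep], threshold: float) -> Optional[LineageStep]:
    """Return the earliest step (source first) whose quality falls below threshold."""
    for step in reversed(path):
        if step.quality < threshold:
            return step
    return None


# Hypothetical lineage for the zip code that Explainable AI flagged.
steps = {
    "crm.property.zip": LineageStep(
        "crm.property.zip", None, "entered in source system", 0.99),
    "staging.property.zip": LineageStep(
        "staging.property.zip", "crm.property.zip", "ETL job joins address table", 0.62),
    "features.zip_code": LineageStep(
        "features.zip_code", "staging.property.zip", "feature pipeline selects column", 0.62),
}

path = trace_to_source(steps, "features.zip_code")
suspect = first_suspect(path, threshold=0.9)

for step in path:
    print(f"{step.name} <- {step.transform} (quality {step.quality:.2f})")
if suspect is not None:
    print(f"Investigate first: {suspect.name}")
```

Walking the path from the source forward and stopping at the first quality drop points to the step most likely to have introduced the error, which is where a fix should start.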

Data Lineage Tool for AI

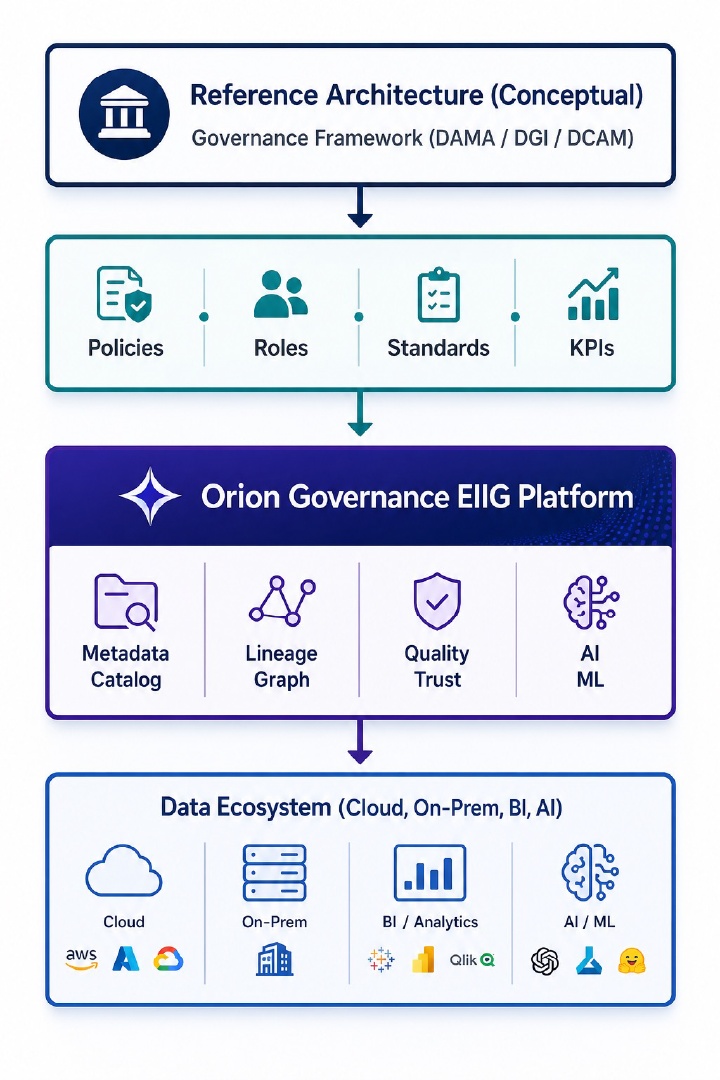

Check out Orion Governance EIIG’s data lineage functionality. EIIG quickly and automatically extracts metadata with a set of 60+ connectors for a range of systems, including mainframes, databases, ETL tools, business intelligence reports, and even Java and Python code. It uses this metadata to build an end-to-end data lineage across systems at a very granular level and visualizes it on an easy-to-follow knowledge graph. Overlays of active metadata such as quality scores and tags let you quickly identify problems and errors. EIIG is also featured as part of the 2023 Machine Learning, AI and Data Landscape.

About the Author: Ed Grossman is the Customer Success Architect at Orion Governance, Inc. Connect with Ed on LinkedIn.
